TSLANet: Rethinking Transformers for Time Series Representation Learning

by: Emadeldeen Eldele, Mohamed Ragab, Zhenghua Chen, Min Wu, and Xiaoli Li

Abstract

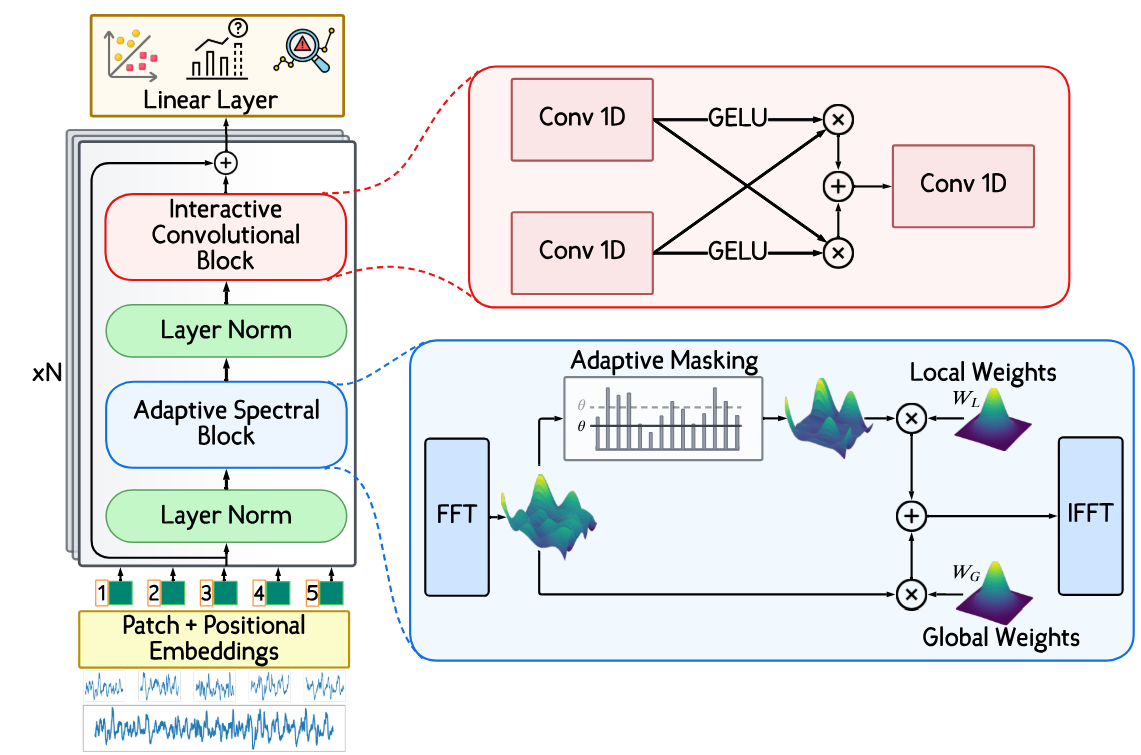

Time series data, characterized by its intrinsic long- and short-range dependencies, poses a unique challenge across analytical applications. While Transformer-based models excel at capturing long-range dependencies, they face limitations in noise sensitivity, computational efficiency, and overfitting with smaller datasets. In response, we introduce a novel Time Series Lightweight Adaptive Network (TSLANet), a universal convolutional model for diverse time series tasks. Specifically, we propose an Adaptive Spectral Block, harnessing Fourier analysis to enhance feature representation and to capture both long-term and short-term interactions while mitigating noise via adaptive thresholding. Additionally, we introduce an Interactive Convolution Block and leverage self-supervised learning to refine the capacity of TSLANet for decoding complex temporal patterns and to improve its robustness on different datasets. Our comprehensive experiments demonstrate that TSLANet outperforms state-of-the-art models in various tasks spanning classification, forecasting, and anomaly detection, showcasing its resilience and adaptability across a spectrum of noise levels and data sizes.
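The Adaptive Spectral Block described in the abstract can be illustrated with a minimal NumPy sketch. This is not the repository's implementation: the function name `adaptive_spectral_block`, the fixed (non-learned) complex filters `weight` and `weight_kept`, and the median-normalized energy threshold are assumptions chosen only to show the general idea of filtering a series in the Fourier domain and giving extra treatment to dominant, high-energy frequencies while low-energy (noise-like) ones are left out of that step.

```python
import numpy as np


def adaptive_spectral_block(x, weight, weight_kept, threshold):
    """Filter a batch of series x, shape (batch, length, channels), in the frequency domain.

    weight, weight_kept: complex arrays of shape (length // 2 + 1, channels), standing in
    for learnable filters (hypothetical). threshold: scalar cut-off on normalized energy.
    """
    _, length, _ = x.shape
    # Real FFT along the time axis: shape (batch, length // 2 + 1, channels).
    x_fft = np.fft.rfft(x, axis=1, norm="ortho")

    # Global filter applied to every frequency component.
    out = x_fft * weight

    # Spectral energy per frequency bin, summed over channels: shape (batch, freqs).
    energy = np.sum(np.abs(x_fft) ** 2, axis=-1)
    # Normalize by each sample's median energy so the threshold does not depend on scale.
    normalized = energy / (np.median(energy, axis=1, keepdims=True) + 1e-6)
    # True for bins whose energy exceeds the threshold; others skip the second filter.
    mask = (normalized > threshold)[..., np.newaxis]

    # Second filter applied only to the retained high-energy components.
    out = out + x_fft * mask * weight_kept

    # Back to the time domain, restoring the original length.
    return np.fft.irfft(out, n=length, axis=1, norm="ortho")


# Usage: an all-ones global filter and a zero second filter reduce the block
# to an FFT round trip, so the output should equal the input.
rng = np.random.default_rng(0)
batch, length, channels = 2, 16, 3
freqs = length // 2 + 1
x = rng.standard_normal((batch, length, channels))
weight = np.ones((freqs, channels), dtype=complex)
weight_kept = np.zeros((freqs, channels), dtype=complex)
y = adaptive_spectral_block(x, weight, weight_kept, threshold=1.0)
print(y.shape)            # (2, 16, 3)
print(np.allclose(y, x))  # True
```

The identity check is a cheap sanity test of the FFT round trip. In a trained model, the two filters and the threshold would be learnable parameters rather than fixed arrays.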

Citation

If you find this work useful, please consider citing it.

@article{tslanet,
  title = {TSLANet: Rethinking Transformers for Time Series Representation Learning},
  author = {Eldele, Emadeldeen and Ragab, Mohamed and Chen, Zhenghua and Wu, Min and Li, Xiaoli},
  journal = {arXiv preprint arXiv:2404.08472},
  year = {2024},
}

Acknowledgements

The code in this repository is inspired by the following:

- GFNet: https://github.com/raoyongming/GFNet
- The masking task is taken from PatchTST: https://github.com/yuqinie98/PatchTST
- The forecasting and anomaly detection (AD) datasets are downloaded from the TimesNet repository (Time-Series-Library): https://github.com/thuml/Time-Series-Library
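The masking task credited to PatchTST splits a series into patches, hides a random subset, and trains the model to reconstruct the hidden ones. A minimal NumPy sketch follows, assuming non-overlapping patches, zero-filling of hidden patches, and a loss computed only on hidden patches; the function names `random_patch_mask` and `masked_mse`, the patch length, and the mask ratio are illustrative and are not taken from this repository or from PatchTST.

```python
import numpy as np


def random_patch_mask(x, patch_len, mask_ratio, rng):
    """Split x, shape (batch, length, channels), into non-overlapping patches and
    zero out a random subset. Returns (masked, patches, mask); mask is True where hidden."""
    batch, length, channels = x.shape
    num_patches = length // patch_len
    # Drop trailing time steps that do not fill a whole patch.
    patches = x[:, : num_patches * patch_len].reshape(batch, num_patches, patch_len, channels)

    num_masked = int(num_patches * mask_ratio)
    # Rank random noise per sample; the num_masked lowest-ranked patches are hidden.
    noise = rng.random((batch, num_patches))
    ranks = np.argsort(np.argsort(noise, axis=1), axis=1)
    mask = ranks < num_masked

    masked = np.where(mask[:, :, None, None], 0.0, patches)
    return masked, patches, mask


def masked_mse(pred, target, mask):
    """Mean squared reconstruction error over hidden patches only."""
    per_patch = ((pred - target) ** 2).mean(axis=(2, 3))
    return (per_patch * mask).sum() / mask.sum()


# Usage: 96 time steps in patches of 8 give 12 patches; 40% of 12 rounds down to 4 hidden.
rng = np.random.default_rng(0)
x = rng.standard_normal((4, 96, 7))
masked, patches, mask = random_patch_mask(x, patch_len=8, mask_ratio=0.4, rng=rng)
print(masked.shape)      # (4, 12, 8, 7)
print(mask.sum(axis=1))  # [4 4 4 4]
```

Restricting the loss to hidden patches means the model gains nothing by copying visible inputs and must infer the missing segments from context.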